Automatically understanding funny moments (i.e., the moments that make people laugh) when watching comedy is challenging, as they depend on various cues, such as body language, dialogue and culture.

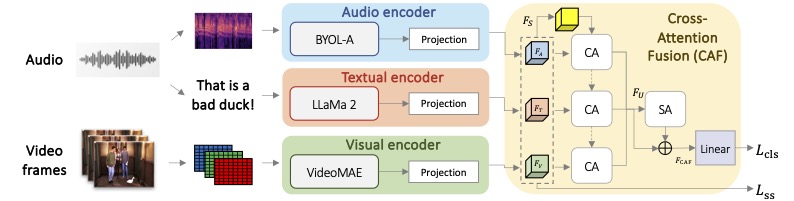

In this paper, we propose FunnyNet-W, a model that relies on cross- and self-attention for visual, audio and text data to predict funny moments in videos. Unlike most methods, which rely on ground-truth data in the form of subtitles, we exploit modalities that come naturally with videos: (a) video frames, as they contain visual information indispensable for scene understanding; (b) audio, as it contains higher-level cues associated with funny moments, such as intonation, pitch and pauses; and (c) text automatically extracted with a speech-to-text model, as it can provide rich information when processed by a Large Language Model.

To acquire labels for training, we propose an unsupervised approach that spots and labels funny audio moments.

We provide experiments on five datasets: the sitcoms TBBT, MHD, MUStARD and Friends, and the TED-talk dataset UR-Funny.

Extensive experiments and analysis show that FunnyNet-W successfully exploits visual, auditory and textual cues to identify funny moments, while our findings reveal FunnyNet-W's ability to predict funny moments in the wild. FunnyNet-W sets a new state of the art for funny-moment detection with multimodal cues on all datasets, both with and without ground-truth information.