Musings

Neil Gaiman's best tips for survival in an artistic academic career

Even though there are some differences between art and academia, it is quite striking how some of Neil Gaiman's advice to young artists mirrors what I would tell my students (with at least one notable exception... :) ).

The secret to keeping fruitful collaborations

You get work however you get work, but people keep working [..] because their work is good, and because they're easy to get along with, and because they deliver the work on time. And you don't even need all three... two out of three is fine!

- People will tolerate how unpleasant you are if the work is good and you deliver it on time.

- People will forgive the lateness of your work if it's good and they like you.

- And you don't have to be as good as everyone else if you're on time and it's always a pleasure to hear from you.

Neil Gaiman, commencement speech at the University of the Arts Class of 2012

Of course, we should aim for three out of three, but the observation that two suffice is spot on in my experience (and offers a parsimonious and flattering explanation for my continued academic employment despite my chronic tardiness :) ).

On impostor syndrome

The first problem of [..] success is the unshakable conviction that you are getting away with something and that, any moment now, they will discover you.

[..] I was convinced there would be a knock on the door, and a man with a clipboard [..] would be there to tell me it was all over and they'd caught up with me and now I would have to go and get a real job, one that didn't consist of making things up and writing them down, and reading books I wanted to read...

And then I would go away quietly and get the kind of job where I would have to get up early in the morning and wear a tie and not make things up anymore.

Neil Gaiman, commencement speech at the University of the Arts Class of 2012

I guess most academics can relate to that (generally undeservedly), especially under the grind of our recurrent evaluations (institutions, employers, funding bodies, peers...). Although some of us already wear a tie to start with...

On emails

There was a day when I looked up and realized that I had become someone who professionally replied to emails and who wrote as a hobby.

Neil Gaiman, commencement speech at the University of the Arts Class of 2012

An increasing number of my colleagues adopt quite extreme measures when it comes to email management (e.g. checking their emails only twice a day, or only within certain time slots...). I think that finding ways to limit the flow of information requiring active processing is one of the great challenges faced by humanity in the information era, and something we'll have to teach our (academic) children (I haven't found an acceptable solution myself... still looking).

On cheating on your CV (not an endorsement! :) )

People get hired because, somehow, they get hired... In my case I did something which these days would be easy to check and would get me into a lot of trouble and when I started out in those pre-internet days seemed like a sensible career strategy. When I was asked by editors who I'd written for, I lied. I listed a handful of magazines that sounded likely and I sounded confident and I got jobs. I then made it a point of honor to have written something for each of the magazines I've listed to get that first job, so that I hadn't actually lied, I'd just been chronologically challenged.

Neil Gaiman, commencement speech at the University of the Arts Class of 2012

This one I do not condone, of course, but working hard to deserve in retrospect whatever undeserved advantage you get at some point is a great attitude to adopt (cf. impostor syndrome).

On luck

Luck is useful. Often you'll discover that the harder you work, and the more wisely that you work, the luckier you will get but there is luck, and it helps.

Neil Gaiman, commencement speech at the University of the Arts Class of 2012

Parting words

Be wise, because the world needs more wisdom, and if you cannot be wise pretend to be someone who is wise and then just behave like they would.

Neil Gaiman, commencement speech at the University of the Arts Class of 2012

If the shoe fits... (aka Python's duck typing :) ).

(Canadian) pennies for your thoughts

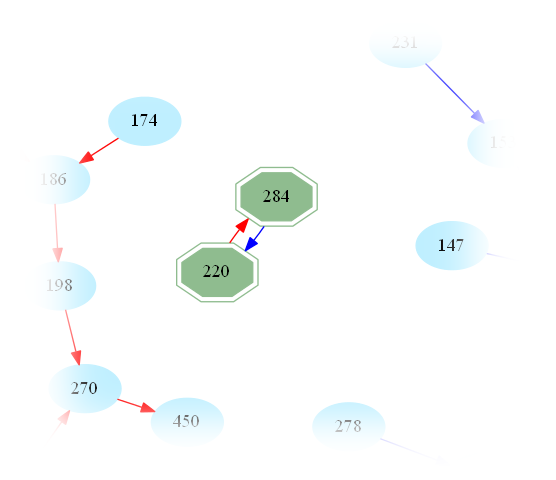

Background: In 2012, Canada decided to phase out the penny from its coinage system. Product prices may still use arbitrary cents (anything else would be impractical, since prices in Canada do not typically include taxes), but cash transactions are now rounded up/down to the closest multiple of 5 cents, as shown in the image above.

For instance, if two products A and B are worth 67¢ and \$1.21 respectively, one will have to pay \$1.90 to buy them together, instead of their accumulated price of \$1.88. In this case, the seller makes an extra 2¢ in the transaction, thanks to the imperfect round-off. Mathematically speaking, it all depends on the remainder $r$ of the total price in a division by 5¢: If $r$ equals $1$ or $2$, then the seller loses $r$ cents, but if $r$ equals $3$ or $4$, then the seller makes an extra $5-r$ cents in the transaction.
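The rounding rule is simple enough to sketch in a few lines of Python. This is only an illustration: the function names `round_to_nickel` and `seller_gain` are mine, and all amounts are assumed to be integer numbers of cents.

```python
def round_to_nickel(total_cents: int) -> int:
    """Round a cash total (in cents) to the closest multiple of 5 cents."""
    r = total_cents % 5
    # r = 1 or 2: round down by r cents; r = 3 or 4: round up by 5 - r cents.
    return total_cents - r if r <= 2 else total_cents + (5 - r)


def seller_gain(total_cents: int) -> int:
    """Cents gained (positive) or lost (negative) by the seller through rounding."""
    return round_to_nickel(total_cents) - total_cents


basket = [67, 121]  # prices of products A and B, in cents
total = sum(basket)
print(total, round_to_nickel(total), seller_gain(total))  # 188 190 2
```

Bought separately, A would be rounded down to 65¢ (the seller loses 2¢) and B down to \$1.20 (the seller loses 1¢), so the outcome really depends on how items are grouped into a single transaction.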

My wife and I were wondering: Can a supermarket manipulate the prices of its products in such a way that the rounded totals translate, on average, into an extra profit for the company? We were not overly paranoid about it, but it seemed like a good opportunity to exercise our brains while strolling/bouldering (and, very occasionally, surfing) on the amazing beaches of Oahu's famed North Shore...

As a first approximation, we can model the behavior of a shopper (oblivious to the imperfect change issue) as a Markov chain. In other words, given a list of products $A=\{a_i\}_{i=1}^k$, each associated with a price $p_i$, one chooses the $n$-th item $X_n$ with probability $${\mathbb P}(X_n=a\mid X_{n-1}\cdots X_{n-m})$$ which depends on his/her previous $m$ choices. One can then extend this Markov chain so that it also remembers the remainder (modulo 5¢) $R_n$ of the total price after $n$ purchases. In this extended model, the probability of choosing an $n$-th item $X_n$ and ending with a remainder $R_n$ only depends on the $m$ previously chosen products and the previous remainder $R_{n-1}$. With this in mind, the expected gain $G_n$ of the supermarket can now be written as $$ \mathbb{E}(G_n\mid A) = \sum_{a\in A}\left|\begin{array}{ll}+2\,{\mathbb P}((R_n,X_n)=(3,a)\mid R_{0}=0)\\+{\mathbb P}((R_n,X_n)=(4,a)\mid R_{0}=0)\\-{\mathbb P}((R_n,X_n)=(1,a)\mid R_{0}=0)\\-2\,{\mathbb P}((R_n,X_n)=(2,a)\mid R_{0}=0).\end{array}\right.$$ For small values of $n$, this expectation depends a lot on the list $A$ and its associated prices $p_i$. For instance, if the customer buys a single item ($n=1$), the seller could set the remainder of all its prices to $3$ and make an easy additional 2¢ on each transaction.
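To play with the expected gain numerically, here is a minimal Python sketch. It makes a simplifying assumption that is mine and not part of the model above: the shopper is memoryless, so each item is drawn independently with a fixed probability. The function name `expected_gain` and the sample prices are made up for the illustration.

```python
from fractions import Fraction

# Seller's gain in cents for each remainder of the total modulo 5.
GAIN = {0: 0, 1: -1, 2: -2, 3: 2, 4: 1}


def expected_gain(prices, probs, n):
    """Expected rounding gain (in cents) after n independent purchases.

    prices: item prices in cents; probs: matching choice probabilities.
    Assumption: a memoryless shopper (each item drawn independently).
    """
    dist = [Fraction(0)] * 5
    dist[0] = Fraction(1)  # R_0 = 0
    for _ in range(n):
        nxt = [Fraction(0)] * 5
        for r, mass in enumerate(dist):
            for price, p in zip(prices, probs):
                nxt[(r + price) % 5] += mass * p
        dist = nxt
    return sum(GAIN[r] * mass for r, mass in enumerate(dist))


# Hypothetical price list in which every price ends in 3 or 8 cents.
rigged = [103, 248, 58]
equal = [Fraction(1, 3)] * 3
print(expected_gain(rigged, equal, 1))  # 2
print(expected_gain(rigged, equal, 2))  # -1
```

With this rigged list the seller gains 2¢ on single-item baskets but loses 1¢ on two-item baskets, since the remainder moves from 3 to 1.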

However, for large values of $n$ (and assuming the ergodicity of the Markov chain), the stationary distribution can be assumed, i.e. the expected gain $\mathbb{E}(G_\infty\mid A)$ no longer depends on the initial state or, equivalently, on $n$. It is then possible to show that, no matter what the consumer's preferences (i.e. the probabilities in the Markov chain) or the product list/prices $A$ are, one has $$\mathbb{E}(G_\infty\mid A)=0.$$ This can be proven thanks to a very elegant symmetry argument by James Martin on MathOverflow. In other words, there is simply no way for the supermarket to rig the game, even if the consumer does not actively avoid being short-changed (but this assumes that the consumer buys enough products, so the supermarket may, and will, still win in the end!).
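As a sanity check, here is the simplest special case worked out by hand. It relies on an extra assumption of mine, stronger than what the statement requires: the shopper is memoryless, and $q(d)$ (my notation) denotes the probability that a chosen price is congruent to $d$ modulo 5. The remainder then performs a random walk with $${\mathbb P}(R_n=r'\mid R_{n-1}=r)=q\big((r'-r) \bmod 5\big),$$ and the uniform distribution is stationary, since for every $r'$ $$\sum_{r=0}^{4}\frac{1}{5}\,q\big((r'-r) \bmod 5\big)=\frac{1}{5}\sum_{d=0}^{4}q(d)=\frac{1}{5}.$$ Under ergodicity this stationary distribution is the unique limit, and weighting each remainder by the seller's gain gives $$\mathbb{E}(G_\infty\mid A)=\frac{1}{5}\,\big(0-1-2+2+1\big)=0.$$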

What Feynman saw in a flower...

Because understanding the process does not necessarily make the result any less appealing! (otherwise, would there be any hope left for lasting companionship? ;) )

Beautiful work by Fraser Davidson.

If you haven't seen one of these...

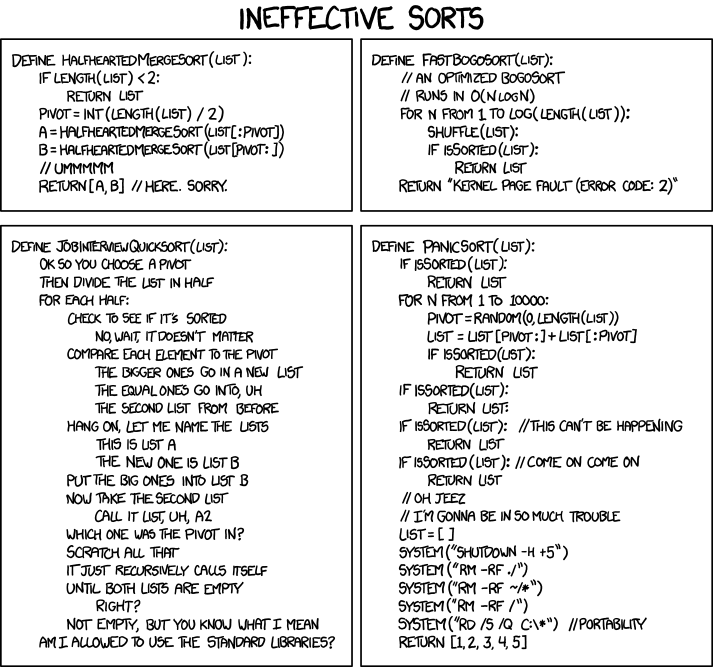

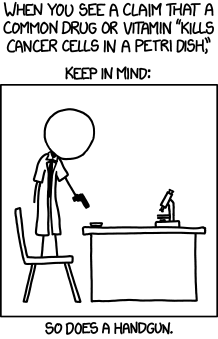

... then you obviously never TA'ed Bioinformatics ;) (Lucky bastard!). Full credits/kudos to XKCD.

On another note (and yes, this will be my last xkcd strip, otherwise my page would simply end up being a mirror):

Best Journal ever!!!

Ever complained about the academic daily routine having become a quest for the best journal that would nevertheless accept one's half-cooked manuscript? Ever felt that citations and impact factors were way overrated, and that selectivity should prevail? Look no further, as it is my pleasure to share my recent discovery of (arguably) the best journal ever!

With an acceptance rate way lower than 1%, the Journal of Universal Rejection may rightfully pride itself on enforcing the strictest of academic standards (Nature beware!). Created in 2009, the journal solicits submissions in poetry, prose, visual art, and research. Despite such a wide scope, its widely competent editorial board can be trusted to cast an expert eye on your submission, and helpfully motivate its final decision.

P vs NP: The irrefutable proof ;)

Today is the day I almost died laughing while reading Scott Aaronson's Shtetl-Optimized blog dedicated to Complexity Theory. Ok, I know, this sort of humor alone may be regarded as a conclusive diagnosis by future generations of psychopathologists, but I'd still like to share his beautiful, human-centric argument for why P != NP. Basically, he is asked the question:

He starts by stating the question in four informal ways, one of which is:

and adds

I woke up the following morning at the hospital, and must be clinically monitored during any future access to Scott's blog, but I encourage you to check it out for a daily dose of CS wittiness.

Amicable numbers

Amicable numbers are members of the number theory zoo (see their Wikipedia page), which, like trousers and all sorts of useful stuff, conveniently come in pairs. Formally, one starts by defining the restricted divisor function s(n) to be the sum of all divisors of n, itself excepted.

For instance, for n = 220, one finds $$s(220) = 1+2+4+5+10+11+20+22+44+55+110 = 284.$$

Then, amicable numbers are pairs of natural numbers (p,q) such that s(p) = q and s(q) = p.

To make this notion explicit, we get back to the example above and find $$s(284) = 1+2+4+71+142 = 220,$$

and therefore (220,284) are amicable numbers.
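The definition translates directly into a few lines of Python. This is a naive sketch, fine for small numbers only; `s` follows the notation of the text, while the helper name `are_amicable` is mine.

```python
def s(n: int) -> int:
    """Restricted divisor function: sum of the divisors of n, n itself excepted."""
    return sum(d for d in range(1, n // 2 + 1) if n % d == 0)


def are_amicable(p: int, q: int) -> bool:
    """True if s(p) = q and s(q) = p."""
    return s(p) == q and s(q) == p


print(s(220), s(284))          # 284 220
print(are_amicable(220, 284))  # True
```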

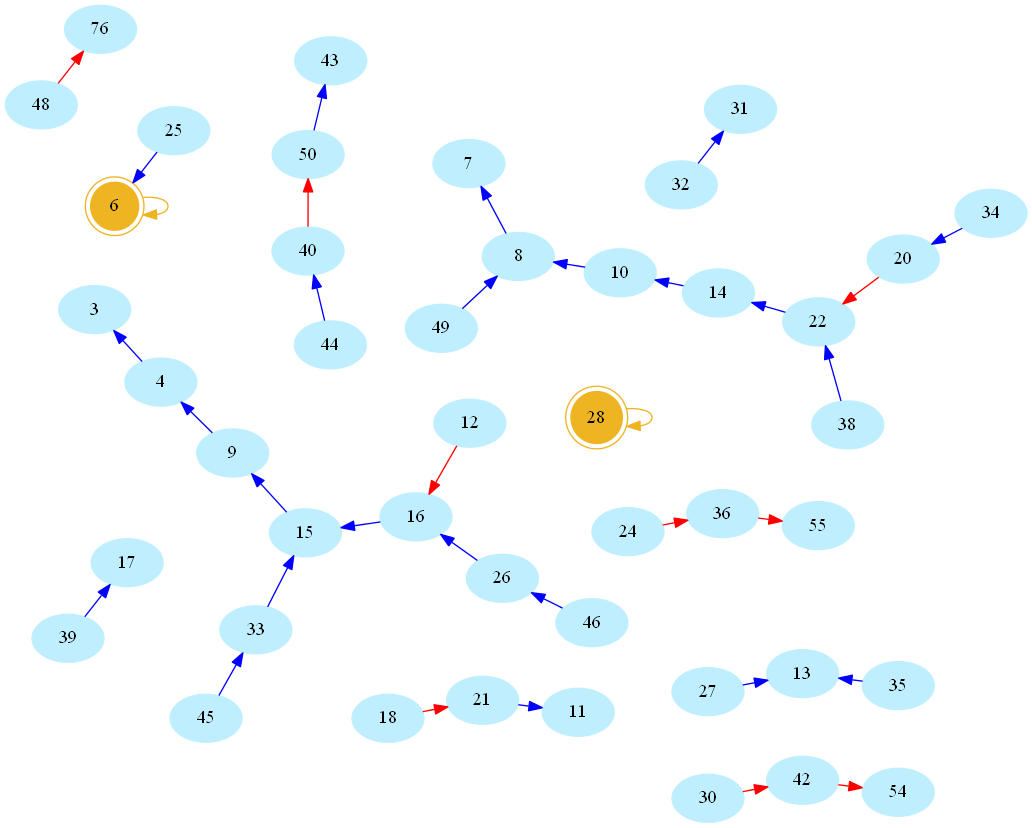

Now there is something about this definition that may sound arbitrarily limited to a computer scientist. Indeed, consider the (infinite) directed graph whose vertices are natural numbers, and whose edges are pairs (n,s(n)). Here is a picture, rendered using the almighty GraphViz (neato mode), showing the resulting network/graph for numbers below 50 (blue/red arcs indicate decreasing/increasing values of s, number 1 omitted for readability, golden nodes are perfect numbers):

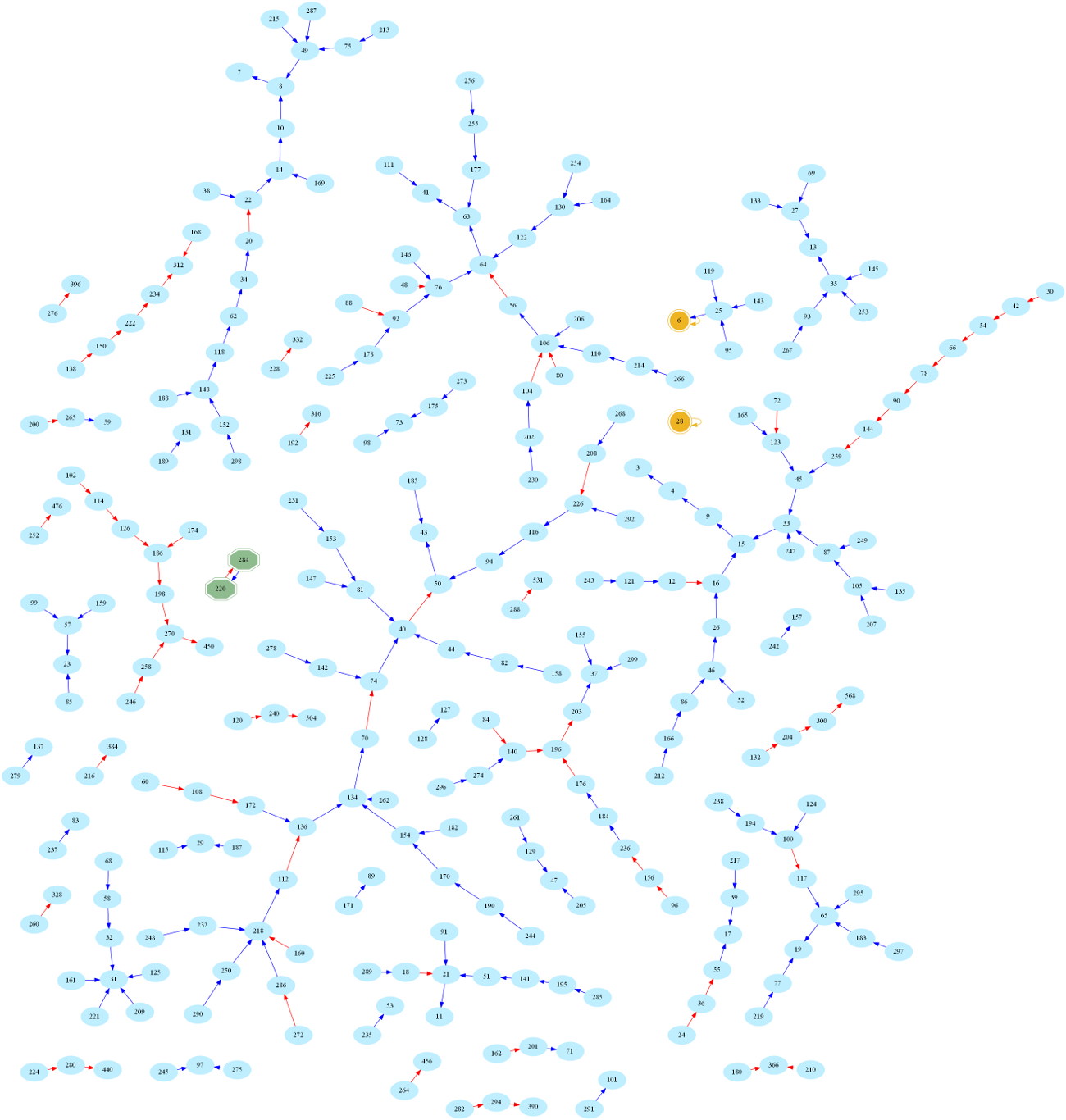
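For the curious, a graph of this kind can be produced along the following lines. This is a sketch and not the script used for the picture: the function name `aliquot_dot`, the exact node and edge attributes, and the choice to keep arcs pointing at numbers above the limit are all assumptions of mine.

```python
def s(n: int) -> int:
    """Sum of the divisors of n, n itself excepted."""
    return sum(d for d in range(1, n // 2 + 1) if n % d == 0)


def aliquot_dot(limit: int = 50) -> str:
    """Return a GraphViz DOT description of the arcs (n, s(n)) for 2 <= n < limit."""
    lines = ["digraph aliquot {"]
    for n in range(2, limit):
        m = s(n)
        if m == n:
            # Perfect numbers are drawn as golden nodes.
            lines.append(f"  {n} [style=filled, fillcolor=gold];")
        if m < 2:
            continue  # arcs to 1 are omitted for readability
        color = "blue" if m < n else "red" if m > n else "black"
        lines.append(f"  {n} -> {m} [color={color}];")
    lines.append("}")
    return "\n".join(lines)


print(aliquot_dot(50))
```

Saving the output to a file and running it through `neato -Tpng` should then give a comparable drawing.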

And here is the larger graph of numbers below 300 (Full-screen PDF version):

One then easily sees that amicable numbers are in bijection with cycles of length 2 in the graph. Indeed, zooming in on the above graph, one may easily spot the first amicable pair:

This raises the question

Indeed, one can alternatively, yet equivalently, define amicable numbers as pairs

which naturally generalizes into what is called sociable numbers, i.e. k-tuples of numbers

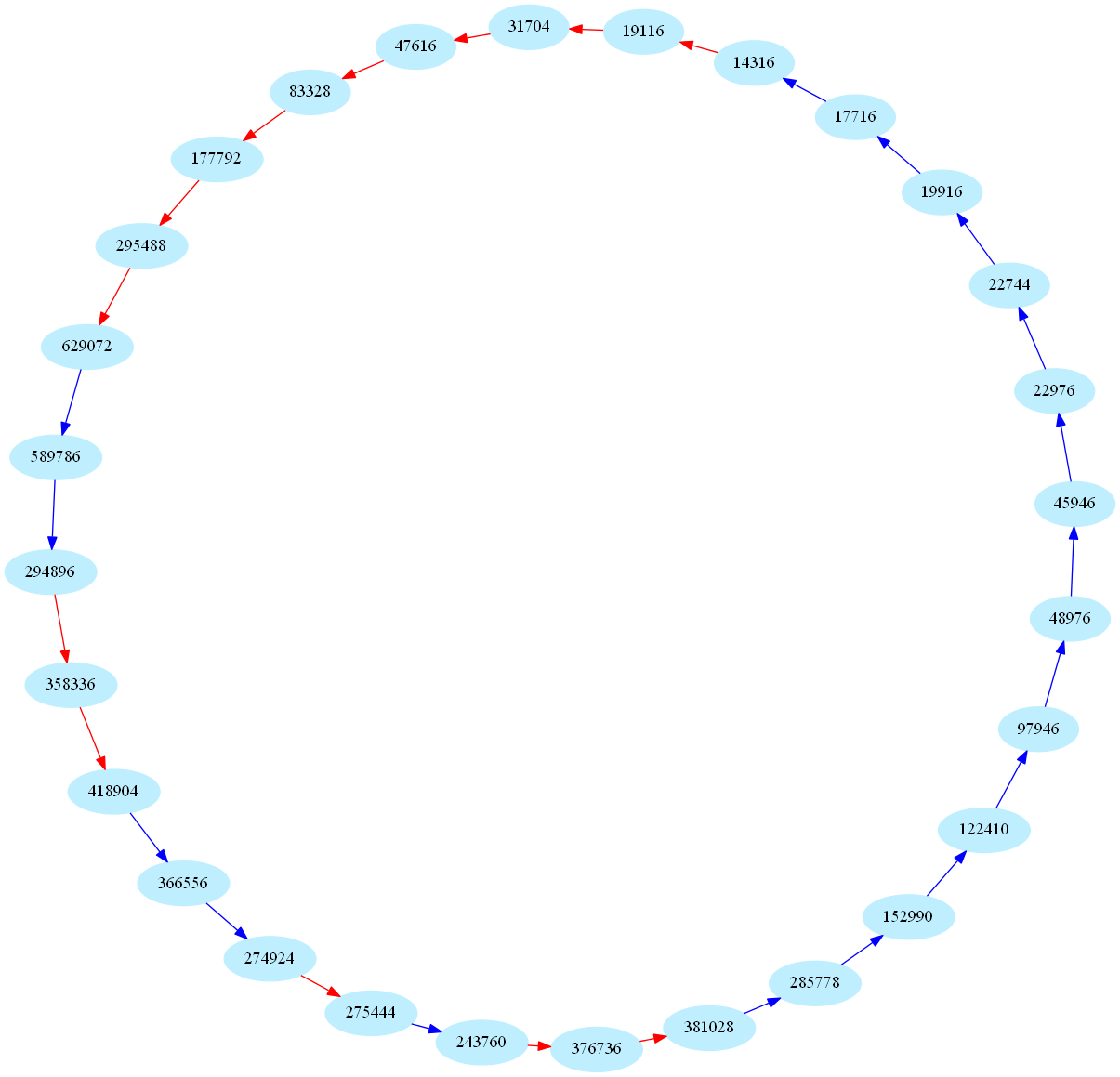

These sequences are also called periodic aliquot sequences. Here is one of the longest periodic sequences known to date (k=28):

These numbers are currently of some interest in number theory:

For k=1, sociable numbers are perfect numbers.

For k=2, sociable numbers are amicable numbers.

In general, what are the aliquot sequences of cycle length k? Do they even exist for large values of k?

Whether there exists a cycle-free infinite aliquot sequence is currently open!
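For small numbers, one can at least explore these cycles by brute force. The sketch below simply iterates s from a starting number and reports the length of the cycle through it, if any; the name `cycle_length` and the `max_steps` cut-off are my own choices.

```python
from math import isqrt


def s(n: int) -> int:
    """Sum of the divisors of n, n itself excepted (divisors found in pairs)."""
    if n < 2:
        return 0
    total = 1
    for d in range(2, isqrt(n) + 1):
        if n % d == 0:
            total += d
            if d != n // d:
                total += n // d
    return total


def cycle_length(n: int, max_steps: int = 100):
    """Return k if n lies on an aliquot cycle of length k <= max_steps, else None."""
    m = n
    for k in range(1, max_steps + 1):
        m = s(m)
        if m == n:
            return k
        if m == 0:
            return None  # the sequence terminated
    return None


for start in (6, 220, 12496, 14316, 12):
    print(start, cycle_length(start))
```

Here 6 is perfect (k=1), 220 is amicable (k=2), 12496 belongs to a sociable cycle of length 5, 14316 to the cycle of length 28, and the sequence starting at 12 terminates.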